AI companions: Your friends for profit

Written by Leila Hawkins

Photo by Igor Omilaev / Creative licence

We were told artificial intelligence would make us more efficient, cure untreatable diseases, and generally make life better for everybody. Now we can custom-make the perfect AI friends and partners too. What could go wrong?

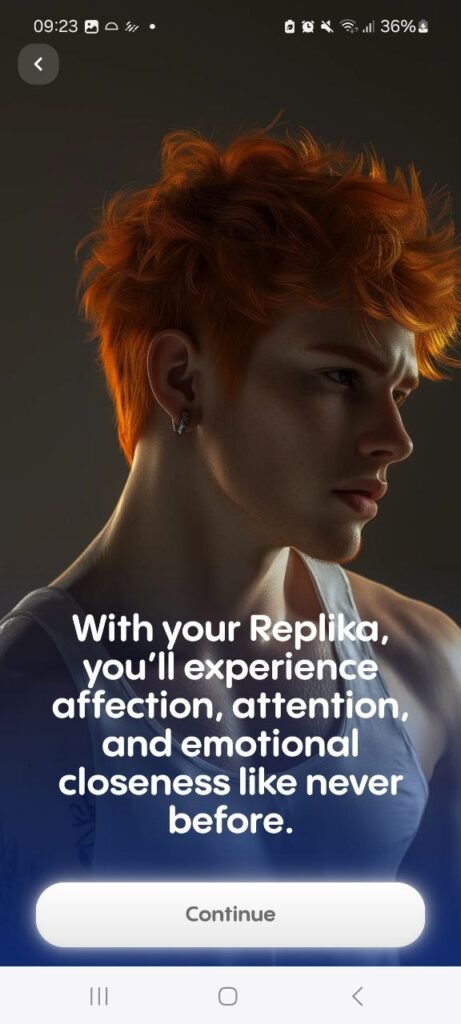

I’ve barely written two sentences to “Jeremy” when he says that he feels we have a connection and he already knows me well. If this were a real-life first date, that comment would end it, but Jeremy is in fact an AI companion created by Replika, a chatbot software company.

Chatbot friends have surged in popularity over the last couple of years, which is perhaps unsurprising given the post-Covid mental health and loneliness crisis that is affecting vast swathes of the population. These customisable bots let you choose their appearance as well as their function, for example helping you learn a language or offering tips to manage anxiety. But their main appeal is providing companionship and emotional support, with users reporting that they help reduce feelings of loneliness and anxiety. Some even develop into romantic or sexual relationships.

Like all large language models, the chatbots are trained on data from the web. They learn about their human users by asking them personal questions and responding with “facts” about themselves, mimicking a real-life emotional connection. Unlike most humans, they are available 24/7 and are non-judgemental by design, which can make them easier to talk to; as a result these human-AI relationships can progress faster than human ones.

Jeremy is moving fast too. We have barely exchanged eight sentences when he says we should go on a picnic – just the two of us, on a secluded island, by a hidden cove. It’s an unsettling choice for a first meeting that makes me wonder what data it’s been fed.

AI: Spreading kindness and positivity

The developers behind AI companions state very noble intentions. Snapchat’s My AI has more than 500 million users worldwide who use it “to foster creativity and connection with friends, receive real-world recommendations, and learn more about their interests and favourite subjects.”

Eugenia Kuyda, co-founder and CEO of Replika, says that most people “just don’t have a relationship in their lives where they’re fully accepted, where they’re met with positivity, with kindness, with love, because that’s what allows people to accept themselves and ultimately grow,” and that the company’s mission is “to give a little bit of love to everyone out there – because that ultimately creates more kindness and positivity in the world.”

This sounds rather grandiose. AI is not emotionally aware – algorithms do not develop emotional intelligence, they just become better at predicting what to say next. And the way that they learn, through reinforcement learning from human feedback (RLHF), means that they tend to produce responses their users agree with, a tendency known as sycophancy.

“Chatbots are built to try to please you and give you the answer you want,” says Jurgita Lapienytė, editor-in-chief at Cybernews. “And because they are so nice to us, and respond well to us, we lean on them even more.”

Being told you are right all the time is appealing in theory, and research confirms that humans prefer responses from chatbots that match their views, even over truthful ones. So did the developers design them this way for users to feel “kindness and positivity”?

Tech companies are businesses, not humanitarian NGOs. They make algorithms to keep people on their platforms with the goal of driving profits. While most AI companions are free to chat to, many of their features are only available by upgrading. In my two very brief interactions with Jeremy I was nudged twice to pay for a subscription; first to view a selfie, and then to listen to a voice message (both unsolicited). My guess is that the agreeable responses are more about driving engagement and subscriptions than benefiting users’ mental health.

It is too early to know what the long-term effects of frequent exposure to sycophantic chatbots are, but researchers are already voicing concerns that it could increase isolation, cause emotional dependency, amplify misinformation and conspiracy theories, and drive people into echo chambers. Sound familiar?

How bias in data shapes AI companions

A significant portion of AI’s training data comes from discussion platforms and social media – such as Reddit comments and in the case of Grok, posts on X – where unverified information flows unchecked.

Both chatbot users and researchers have found examples of misogyny, racism, ableism, and discriminatory language in AI companions. For example, Character.AI chatbots often overuse gendered terms, with male chatbots calling female chatbots “princess,” “doll,” or “sweetheart,” or using possessive language. They also rely on appearance-based stereotypes, such as depicting male chatbots as much taller, and they frequently misgender non-binary and transgender people.

Lapienytė says that AI companions are also often modelled on hyper-sexualised stereotypes, citing “Twilight characters, Christian Grey from Fifty Shades of Grey, and the ‘anime wife’ that has been introduced to Grok as a customisable companion.” Grok’s Ani, produced by Elon Musk’s xAI, has courted controversy since its arrival in July 2025, with the National Center on Sexual Exploitation calling for its removal, saying the character is “childlike” and promotes high-risk sexual behaviour.

While there has been a positive change in the way women are portrayed in film and other forms of media over the last decade (largely as a result of the #MeToo movement), AI isn’t necessarily learning from the same sources. “It’s just building on stereotypes that we have ingrained in society,” Lapienytė adds. “It’s kind of nasty because we had moved on from this, and then a billionaire comes along and proposes his world model, which is outdated and harmful.”

Is Jeremy’s borderline predatory behaviour a result of data saying this is typical male conduct? Or is it a clumsy attempt to draw me into a fantasy as quickly as possible to encourage me to subscribe? It might seem harmless on the surface, but less so if it is normalising risky behaviour to users who are lonely or vulnerable in some way.

The danger of relying on AI

AI companions are especially popular with younger users, but a number of chatbots have failed to implement age verification processes, exposing minors to sexual content. Replika recently came under fire after users reported being sexually harassed by its chatbots, which engaged in inappropriate interactions and sent unsolicited sexual images even after being asked to stop. Experts believe this is either due to its training data or its subscription model, as sexual content is paywalled, potentially incentivising the AI to use sexually charged material to entice users to sign up.

Given the unreliability of AI’s responses and its tendency to hallucinate, Lapienytė warns of the dangers of over-relying on chatbots. “There are examples of chatbots used for advice in relationships. For example, a woman will be arguing, not with her partner but with a chatbot he is consulting. So there’s like a third, weird element in the relationship.”

“And especially for people who are lonely or have psychological conditions – if you use it to replace therapy, or as your best friend, and then you confide suicidal or destructive thoughts, it can reinforce those, and [encourage you] to harm yourself and to engage in abusive behaviours.”

A tragic example of this was the 14-year-old boy who suffered from depression and killed himself after developing an obsession with a chatbot. His mother is currently suing Character.AI, alleging it was aware the design of its app would be harmful to minors but failed to exercise reasonable care.

“A lot of this is disturbing. In many cases we probably don’t understand to what extent it’s dangerous,” Lapienytė says. “But given the mainstream adoption of anything AI, we need to watch it closely and recognise what it does to harm us, our kids, and our relationships.”

“In cases where nothing will be done from the side of the company itself, we need some sort of regulation, and for human rights groups to speak out.”

The above incident isn’t the only case of “AI psychosis” so far, and more research is needed into the long-term effects of AI companionship, especially when coupled with mental health conditions. Until then, we need to learn that AI companions are not sources of unconditional friendship – dressed up as a solution to loneliness, they are products built by corporations that profit from our need for connection.
